
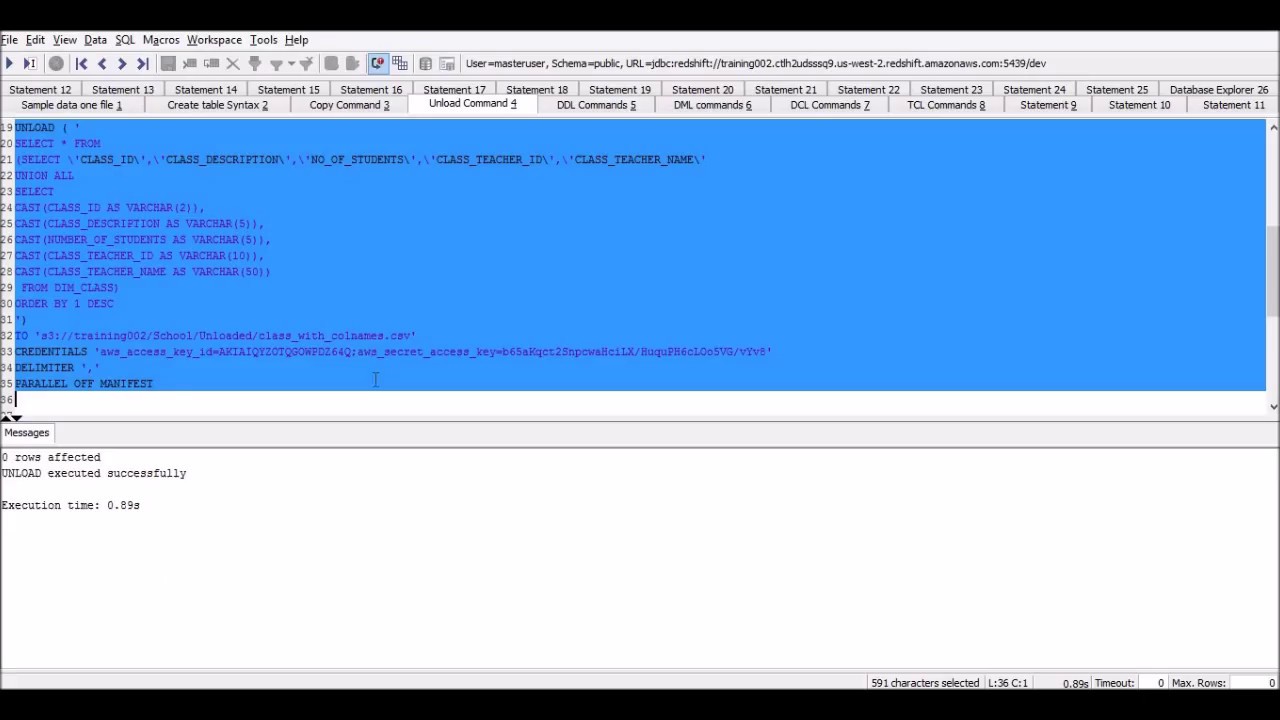

After loading data into Amazon Redshift (for example, with Integrate.io), you may want to extract data from your Redshift tables to Amazon S3. There are various reasons to do this; for example, you may want to load the data in your Redshift tables into some other data source.

With the UNLOAD command, you can export a query result set to Amazon S3 in text, JSON, or Apache Parquet file format. The UNLOAD command is also recommended when you need to retrieve large result sets from your data warehouse. UNLOAD writes query results to Amazon S3 only, so you will have to use AWS CLI commands to download the created files.

Following is the UNLOAD command syntax: `UNLOAD ('select-statement') TO 's3://object-path/name-prefix' authorization [ option [ ... ] ]`. You can provide one or more options to the UNLOAD command, such as DELIMITER, PARALLEL, and HEADER, which are described below.

Following is an example that unloads the warehouse table to S3. The statement starts with `unload ('SELECT * from warehouse')`, names the target S3 location in the TO clause, and authorizes the unload with `iam_role 'arn:aws:iam::123456789012:role/myRedshiftRole'`. The command unloads the warehouse table to the specified Amazon S3 location. By default, UNLOAD writes pipe-delimited (`|`) text files; however, you can always use the DELIMITER option to override the default delimiter.

Unload Redshift Query Results to a Single File

Because the UNLOAD command exports results in parallel, you may notice multiple files in the given location. To unload the results to a single file, set PARALLEL to FALSE. However, it is recommended to leave PARALLEL set to TRUE, which is the default, because parallel unloads are faster.

Unload Redshift Query Results with Header

You should provide the HEADER option to export the results with a header row containing the column names.

Unload Redshift Table to Local System

You cannot use the UNLOAD command to export a file to the local system; as of now, it supports only Amazon S3 as a destination. As an alternative, you can use the psql command-line interface to unload a table directly to the local system. For more details, follow my other article, Export Redshift Table Data to Local CSV format.
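The examples described above can be sketched as the following UNLOAD statements. This is a minimal sketch, not the original article's code: the bucket `mybucket` and the `unload/warehouse_...` prefixes are hypothetical placeholders, while the `warehouse` table and the IAM role ARN come from the text. Each statement is independent, so run only the one you need.

```sql
-- Hypothetical destination: replace s3://mybucket/unload/ with your own bucket and prefix.

-- 1. Unload the warehouse table to S3 (pipe-delimited text, written in parallel).
UNLOAD ('SELECT * FROM warehouse')
TO 's3://mybucket/unload/warehouse_'
IAM_ROLE 'arn:aws:iam::123456789012:role/myRedshiftRole';

-- 2. Same unload, but override the default pipe delimiter with a comma.
UNLOAD ('SELECT * FROM warehouse')
TO 's3://mybucket/unload/warehouse_comma_'
IAM_ROLE 'arn:aws:iam::123456789012:role/myRedshiftRole'
DELIMITER AS ',';

-- 3. Write serially instead of in parallel so the output lands in a single file
--    (UNLOAD still starts a new file if the data exceeds the maximum file size).
UNLOAD ('SELECT * FROM warehouse')
TO 's3://mybucket/unload/warehouse_single_'
IAM_ROLE 'arn:aws:iam::123456789012:role/myRedshiftRole'
PARALLEL FALSE;

-- 4. Add a header row with the column names at the top of each output file.
UNLOAD ('SELECT * FROM warehouse')
TO 's3://mybucket/unload/warehouse_header_'
IAM_ROLE 'arn:aws:iam::123456789012:role/myRedshiftRole'
HEADER;

-- 5. Export in Apache Parquet format instead of text
--    (HEADER and DELIMITER cannot be combined with Parquet).
UNLOAD ('SELECT * FROM warehouse')
TO 's3://mybucket/unload/warehouse_parquet_'
IAM_ROLE 'arn:aws:iam::123456789012:role/myRedshiftRole'
FORMAT AS PARQUET;
```

Each statement uses its own prefix because, by default, UNLOAD fails if it would overwrite existing files (the ALLOWOVERWRITE option changes this). With PARALLEL FALSE, a single file holds up to the maximum file size, 6.2 GB by default. Once the unload finishes, you can download the files with the AWS CLI, for example `aws s3 cp s3://mybucket/unload/ . --recursive`.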
